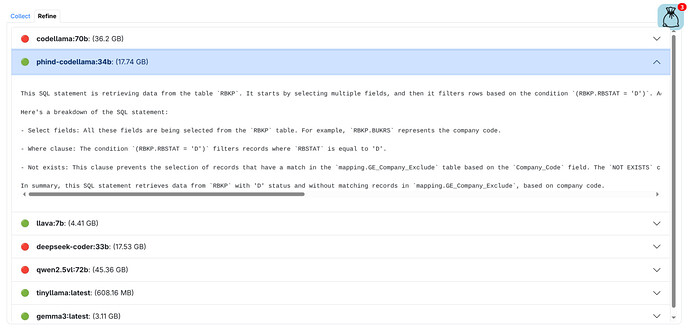

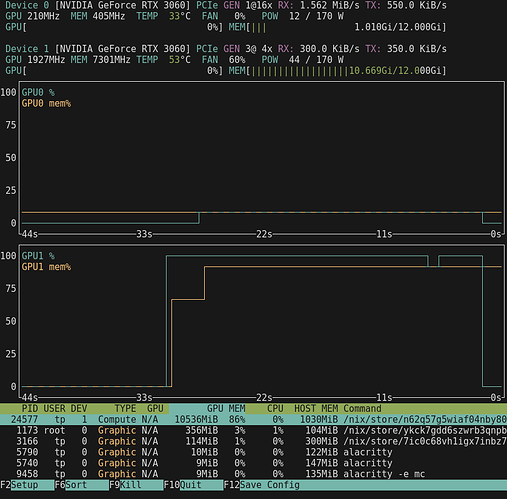

Now that I’ve seen the AI light (see Manus AI solved … ), I’m building a low-cost PC that should run a xxxx:35b AI model at decent speed. Currently my favorite AI is qwen2.5-coder:32b, which on a single RTX3060 also uses all my 64GB of RAM and 6 CPU cores but is still very slow at about one word per second.
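As a rough sanity check on why one card struggles and two might not, here is a minimal sketch of the memory arithmetic. The 12GB-per-card figure, the bits-per-weight value and the overhead allowance are all assumptions for illustration, not measured values, and real usage depends on the quantization and context length chosen.

```python
# Rough VRAM estimate for a quantized model. All constants are assumptions.
VRAM_PER_GPU_GB = 12.0   # assumption: the 12GB RTX3060 variant
BITS_PER_WEIGHT = 4.8    # assumption: typical 4-bit quantization incl. scales
OVERHEAD_GB = 2.0        # assumption: context cache and runtime buffers


def model_weight_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_weight / 8 / 1e9


def fits_in_vram(params_billion: float, gpu_count: int):
    """Return (needed_gb, available_gb, fits) for a given number of GPUs."""
    needed = model_weight_gb(params_billion, BITS_PER_WEIGHT) + OVERHEAD_GB
    available = VRAM_PER_GPU_GB * gpu_count
    return needed, available, needed <= available


if __name__ == "__main__":
    for gpus in (1, 2):
        needed, available, ok = fits_in_vram(32, gpus)
        print(f"{gpus} GPU(s): need ~{needed:.1f} GB, have {available:.0f} GB, fits: {ok}")
```

Under these assumptions a 32b model does not fit on one card, so part of it spills into system RAM and runs on the CPU, which would be consistent with the slow speed I'm seeing. Two cards should hold it, though with little room to spare.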

This will also be my main PC which I use for AI, electronics design, coding, browsing, watching videos and maintaining my Forth user documentation at https://mecrisp-stellaris-folkdoc.sourceforge.io/.

I’m also turning my current PC into a NAS for all my data storage. I already have at least 10TB of ZFS data stored!

So I’ve listed all the components below for the entertainment of the hardware heads here.

PC Hardware

- 2x RTX3060 GPUs, which are new ‘oldies’ but still current and the cheapest half-decent units available. They max out at 170 Watts each.

- MSI B550-A Pro Motherboard, because it has two PCIe x16 slots for the two RTX3060 GPUs

- AMD Ryzen 5 5500 CPU, the cheapest 6-core CPU available at $130

- 4x 32GB Corsair Vengeance LPX 3200MHz CL16 DDR4 (two 64GB 2x32GB kits) for 128GB of RAM

- 1x Crucial P3 M.2 NVMe SSD 1TB for the system and some local storage

- 2x TP-Link 2.5 Gigabit PCIe Network Adapters, one for this PC and the other for the NAS. They will form a separate dedicated subnet link, PC to NAS only. I’d have gone for 10Gb NICs, but the NAS is FreeBSD and I’m not sure it can support the readily available TP-Link 10Gb NICs. The AI PC will run Debian or Ubuntu Linux, or even Fedora, because Linux has excellent Ollama support. I haven’t decided which distro to use yet.

- 650 Watt Corsair HX650 PSU. I purchased 8 units in 2014 after spending a week reviewing PSUs online. This one had the best internal layout, heatsinks and cooling I could find.

- Enthoo Pro case: I bought it about 7 years ago, and it’s still new and unboxed. The existing PC, which will become the NAS, is in the same model of case.
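For anyone wondering whether 650 Watts is enough for two GPUs, here is a minimal power budget sketch. The GPU figure comes from the list above and the 65 Watt CPU figure is AMD's rated TDP for the Ryzen 5 5500. The allowance for everything else is an assumption, and short GPU power spikes above the rated maximum are not modeled.

```python
# Rough steady-state power budget for the AI PC. Not a measurement.
GPU_WATTS = 170     # from the parts list: RTX3060 maximum per card
GPU_COUNT = 2
CPU_WATTS = 65      # rated TDP of the Ryzen 5 5500
OTHER_WATTS = 75    # assumption: motherboard, RAM, SSD, fans, NIC
PSU_WATTS = 650     # Corsair HX650


def power_budget(gpu_watts, gpu_count, cpu_watts, other_watts, psu_watts):
    """Return (total_load_watts, headroom_watts, load_fraction_of_psu)."""
    total = gpu_watts * gpu_count + cpu_watts + other_watts
    return total, psu_watts - total, total / psu_watts


if __name__ == "__main__":
    total, headroom, fraction = power_budget(
        GPU_WATTS, GPU_COUNT, CPU_WATTS, OTHER_WATTS, PSU_WATTS
    )
    print(f"Estimated load: {total} W")
    print(f"Headroom:       {headroom} W")
    print(f"PSU loading:    {fraction:.0%}")
```

With these numbers the estimated full load is well inside the PSU rating, but the margin shrinks quickly if the "other" allowance turns out to be too low.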

NAS

- FreeBSD because of its ZFS file system support, using two 250GB SSDs in a ZFS mirror for the system files.

- Ryzen 3600 CPU (older and cheaper at $130), which runs this PC and is really good.

- ASUS TUF B450M II Pro Gaming Motherboard, in use for the last 2 years with no problems.

- 20TB of ZFS HDD storage on Seagate “Ironwolf” drives. These drives feature CMR technology, which is strongly recommended over SMR for ZFS use. Two 4TB drives form a ZFS mirror for all my projects and vital data, and two 8TB drives provide general storage (non-RAID).

- Older Nvidia GTX660 GPU, purchased in 2014. It’s been super reliable. I need it because the Ryzen 3600 doesn’t have a built-in GPU.

- PSU 650 watt, same Corsair HX650 as the AI PC.

- TP-Link 2.5 Gigabit PCIe Network Adapter as described above

- 32GB of RAM (it’s only a NAS, but ZFS is RAM hungry)

- Enthoo Pro case (now about 10 years in daily service and still perfect, no rust etc)

- Basic CLI only FreeBSD access for the user
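To get a feel for what the 2.5 Gigabit link means in practice compared with the 10Gb option I passed on, here is a small sketch of the transfer time arithmetic. The 20GB file size and the 90% link efficiency are assumptions for illustration, and the spinning disks in the NAS may well be slower than the link.

```python
# Idealized transfer time over the dedicated PC-to-NAS link.
def transfer_seconds(size_gb: float, link_gbps: float, efficiency: float = 0.9) -> float:
    """Seconds to move size_gb (1 GB = 1e9 bytes) over a link_gbps link.

    efficiency is an assumed fraction of the raw line rate left after
    protocol overhead; it is not a measured value.
    """
    bits = size_gb * 8e9
    return bits / (link_gbps * 1e9 * efficiency)


if __name__ == "__main__":
    size_gb = 20.0  # assumption: a large model file or project archive
    for gbps in (1.0, 2.5, 10.0):
        secs = transfer_seconds(size_gb, gbps)
        print(f"{gbps:>4} Gb/s link: about {secs:.0f} s for {size_gb:.0f} GB")
```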

The main plan is to have a reasonable PC that will do AI, design and general Internet use etc, and a NAS with decent response times, ZFS and decent backup strategies (inherent with ZFS).

So nothing special or super expensive, no dual 64-core Intel Xeon server CPUs (looking at David).

Gaming? At 70+ years of age, I haven’t gamed for years, not because I can’t but because I simply enjoy design and programming more. Also probably because my ego couldn’t handle every teenager I game against online wiping me out in 30 seconds.