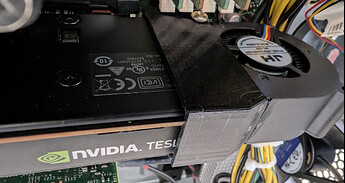

The Tesla P4 arrived earlier today. The card doesn’t come with any cooling as it’s meant to be cooled by the airflow in rack mount servers, so I also bought a blower fan and 3D printed bracket from eBay last week so I could run the card in a tower machine. This slots neatly onto the end of the P4 and contains a 4-pin fan which connects directly to the P330’s motherboard and forces air through the P4’s heatsink. I probably could have pulled something from the parts bin and cobbled something together, but this was a neat pre-made solution for US$12.99 posted (exactly AU$20.10 after the exchange rate). I’ve updated the $520 cost to $540 to reflect the addition of the blower fan. Pic attached below.

I’ll also note for anyone going down this route - save yourself going slightly mad going around in circles with unrelated and nondescript errors when trying to get the CUDA kernel modules running for that card - disable Secure Boot ![]() .

.

Disclaimer: There have been updates to Ollama (0.9.0), the DeepSeek R1 14B model, and the Nvidia drivers since my initial tests in my original post. The results will not be directly comparable, but will still provide an idea of the difference in performance.

As expected, the $500 challenge build performed far better with the Tesla P4 8GB card installed.

Using Debian 12, Ollama v0.9.0, and the deepseek-r1:14b model I experienced a prompt eval rate of 18.09 tokens/s, and an eval rate of 5.59 token/s.

For the purpose of comparison only, I ran the same test on my MacBook Air M4 with 32GB RAM. Unsurprisingly, it was the fastest configuration tested. I was shocked to see the difference in prompt eval rate between the M1 and M4. If anyone is interested, I’ll rerun the M1 test using Ollama 0.9.0 and the latest version of the DeepSeek R1:14b model to see whether they’ve been somehow better optimised for Apple M-series processors in the updates last week.

Using macOS Sequoia 15.5, Ollama v0.9.0, and the deepseek-r1:14b model I experienced a prompt eval rate of 53.02 tokens/s, and an eval rate of 10.74 token/s.

Updated - Summary of results

| HP Z220 + GTX 1050Ti | MacBook Air M1 | Lenovo P330 G2 (No GPU) | P330 G2 + Tesla P4 | MacBook Air M4 | |

|---|---|---|---|---|---|

| Approx. Cost (As tested) | $400 | $550-700+ | $360 | $540 | $2899 |

| Prompt Eval Rate | 5.07 t/s | 1.83 t/s | 8.55 t/s | 18.09 t/s | 53.02 t/s |

| Eval Rate | 2.45 t/s | 6.08 t/s | 3.24 t/s | 5.59 t/s | 10.74 t/s |

I’ve done no performance tuning with any of these configurations but will probably muck around with the P330 a little more to see if I can squeeze that eval rate to above 6.25 tokens/sec.

Draw your own conclusions from my anecdotal experiment, but hopefully I’ve managed to put some substance behind the “for the cash outlay, a new (second hand/ex lease etc) PC is probably a far better starting point” comment a few weeks ago - one can build something pretty good for the homelab for around $500.

Please let me know if you’d like me to run any specific tests with any specific models. The completed P330 will be moved off the workbench to join the rest of the homelab equipment and will have OpenWebUI (in a Proxmox VM) connected to the Ollama API in the coming weeks as I have some more time.