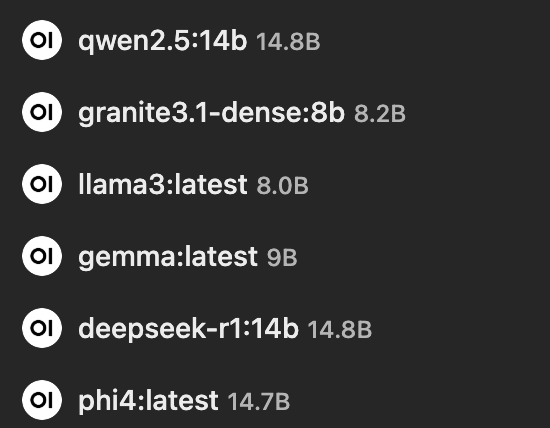

I now have Deepseek-R1:14b (a 9GB image) running locally on this PC. Here is my story.

Firstly, though FreeBSD has an Ollama package, the Ollama server it builds is rubbish. Don’t waste your time.

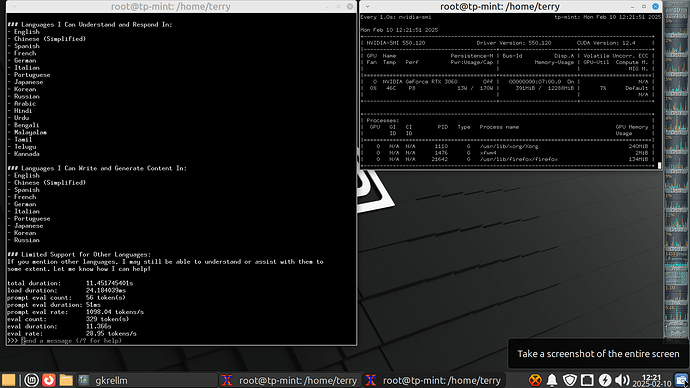

My current Deepseek local install is 100% reliable, instrumented and fast!

- PC: a lowly AMD Ryzen 3600 with 6 cores and 6 threads, 64GB DDR4 RAM.

- A 240GB NVMe card

- Nvidia RTX 3060 with 12GB VRAM ($450 AUD)

Software:

- The latest Linux Mint (Xfce edition)

- Ollama install script from ollama.com

I followed this YouTube tutorial: https://www.youtube.com/watch?v=wLTaQ9scs0E, which is very complete in my opinion.

Linux Mint made the operation a breeze, as it offers the Nvidia driver once the installation is complete. Until then it boots using the Nouveau driver, which runs fine for video but is useless for AI. With Nouveau, Ollama installed and ran using the CPU, but was dead slow.
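If you are not sure which driver you ended up on, a quick check along these lines can help. This is only a sketch: the `gpu_driver` function is my own helper, and it assumes the kernel modules show up in `/proc/modules` under the names `nvidia` and `nouveau`.

```shell
#!/bin/sh
# Report which GPU kernel driver is loaded.
# gpu_driver is a hypothetical helper, not part of Ollama or Mint.
gpu_driver() {
    # $1: path to a module list (defaults to /proc/modules)
    modules="${1:-/proc/modules}"
    if grep -q '^nvidia ' "$modules" 2>/dev/null; then
        echo "nvidia"
    elif grep -q '^nouveau ' "$modules" 2>/dev/null; then
        echo "nouveau"
    else
        echo "unknown"
    fi
}

echo "GPU kernel driver: $(gpu_driver)"

# With the proprietary driver installed, nvidia-smi lists the card and VRAM use.
if command -v nvidia-smi >/dev/null 2>&1; then
    nvidia-smi || true
fi
```

If it reports `nouveau`, Ollama will most likely fall back to the CPU, as it did for me.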

The result is a fast local AI. It is so fast I can’t read the chat window text as it scrolls up. It easily replaces Google in my view and, like my sister, it seems to know about everything!

Keeping your data local is a big advantage these days in my opinion, not to mention there are no adverts.

The install script set Ollama up as a service, then started it. It also created an “ollama” group, so you don’t need to be root to access the AI.
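To confirm the service and group are in place, something like the following should do. It is a rough sketch that assumes a systemd unit named `ollama` and a group named `ollama`; `in_group` is a helper I made up for the example.

```shell
#!/bin/sh
# in_group is a hypothetical helper: succeeds if group $1 is in the list $2.
in_group() {
    # $2: space-separated group names (defaults to the current user's groups)
    groups_list="${2:-$(id -nG)}"
    case " $groups_list " in
        *" $1 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# Service state (prints "active" when the assumed "ollama" unit is running).
if command -v systemctl >/dev/null 2>&1; then
    echo "ollama service: $(systemctl is-active ollama 2>/dev/null || true)"
fi

# Group membership for the current user.
if in_group ollama; then
    echo "You are in the ollama group."
else
    echo "You are not in the ollama group (yet)."
fi
```

A newly added group only takes effect after you log out and back in.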

Once it has finished, you just run ‘ollama run --verbose deepseek-r1:14b’ (if that’s the model you chose) and it should work flawlessly.
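For a scripted version of that step, here is a small sketch. It assumes `ollama list` prints a header row followed by one model per line with the name in the first column; `have_model` and the example prompt are my own additions.

```shell
#!/bin/sh
MODEL="deepseek-r1:14b"

# have_model is a hypothetical helper: succeeds if model $1 appears in the listing.
have_model() {
    # $2: output of `ollama list` (fetched if not supplied)
    list="${2:-$(ollama list 2>/dev/null)}"
    printf '%s\n' "$list" |
        awk -v m="$1" 'NR > 1 && $1 == m { found = 1 } END { exit !found }'
}

if command -v ollama >/dev/null 2>&1; then
    # Download the model first if it is not listed locally.
    have_model "$MODEL" || ollama pull "$MODEL"
    # One-shot prompt; --verbose prints timing statistics after the answer.
    ollama run --verbose "$MODEL" "Explain what an NVMe drive is in two sentences."
else
    echo "ollama not found in PATH" >&2
fi
```

Leave the prompt off the last command to get the interactive chat instead.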

Deepseek feels like a tireless assistant who actually knows a thing or two. I highly recommend it.