Excellent question, @jdownie from over a year ago. Let’s find out. It took me a year to come back to “I’ll try it in an LXC container”, but I got there eventually.


Wasn’t sure whether to break the Linux kernel stuff out into another thread, but I’ll leave it here as it is GPS/PPS/NTP related and I know search engines (and no doubt AI) scrape this site and it may be useful to someone else out there in internet land. It also forces me to try and retrace my steps over the past week, and gives me some documentation in case I need to remember what I did at some stage too.


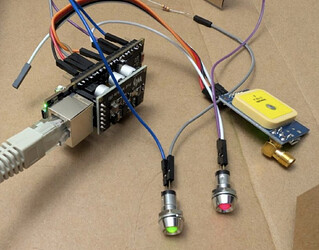

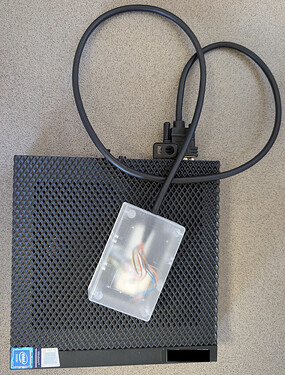

Over the past week I’ve experimented with several configurations on the Wyse 5070 + GNSS module, with the ultimate goal of virtualising or containerising the NTP/PTP side of it. I know it really shouldn’t ever be moved off bare metal, but it was an interesting challenge to work through, and I was motivated by my desire to move Home Assistant and DNS to this machine and set it up as a bit of a low-power “mission critical services” box, while keeping it as “standard Proxmox VE 9” as possible. I’ve now got it running very nicely under LXC on PVE 9, and will gradually move a few other VMs and containers across over the coming month while keeping an eye on the NTP stability. Yes, I could have run NTP on the Proxmox host itself, but I wanted to keep the NTP stuff separate if I could, so that it was nicely bundled up in something I could effortlessly back up, move between hardware (if need be), and that would be more resilient to future Proxmox upgrades breaking something I’ve hand-configured. The headline takeaway: don’t virtualise/containerise this stuff, but if you still want to anyway, it is possible to get something pretty solid going.

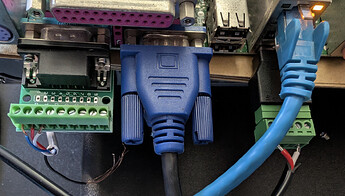

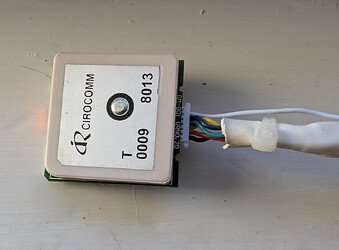

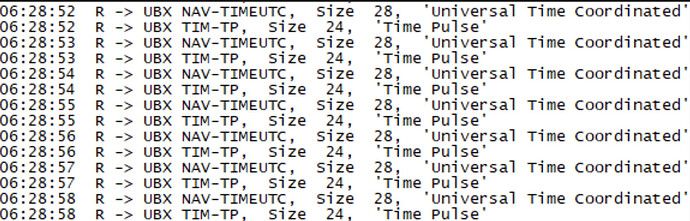

On the project side, I moved from UBX to NMEA, as I was getting occasional failed packet decodes and wanted to eliminate some variables. I also cross-checked that the cable shielding was grounded, and added ferrite beads to the cable between the NaviSys unit and the 5070 to try to help with the occasional corrupted packet, but I don’t think either change had any measurable impact overall.
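Since the trigger for switching was corrupted sentences, it can help to count them directly. Every NMEA 0183 sentence ends in `*HH`, the XOR of all characters between the leading `$` and the `*`. Below is a minimal Python sketch of that check; the sample GGA sentence is the widely used textbook example, not output from the NaviSys module, and this is not how gpsd itself validates input.

```python
def nmea_checksum(body: str) -> int:
    """XOR of every character between '$' and '*' (both excluded)."""
    value = 0
    for ch in body:
        value ^= ord(ch)
    return value


def nmea_is_valid(sentence: str) -> bool:
    """True if the sentence's trailing two-hex-digit checksum matches its body."""
    sentence = sentence.strip()
    if not sentence.startswith(("$", "!")) or "*" not in sentence:
        return False
    body, _, given = sentence[1:].rpartition("*")
    if len(given) != 2:
        return False
    try:
        return nmea_checksum(body) == int(given, 16)
    except ValueError:  # checksum field was not hex
        return False


if __name__ == "__main__":
    good = "$GPGGA,123519,4807.038,N,01131.000,E,1,08,0.9,545.4,M,46.9,M,,*47"
    bad = good.replace("4807.038", "4807.039")  # one flipped bit, as a noisy cable might cause
    print(nmea_is_valid(good), nmea_is_valid(bad))  # True False
```

Running a captured log through `nmea_is_valid` and counting the failures before and after a hardware change (ferrites, grounding) would give a number to compare rather than a gut feel.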

I am not at all an expert in any of the kernel stuff, and was just muddling my way through incrementally trying options. If you spot something wrong or see an avenue for improvement or for me to learn, please let me know!

Having said all that, here is some tuning info that may be of interest to anyone building an NTP server, or who wants to containerise latency sensitive projects more generally:

On the Proxmox host, I set up a systemd unit to attach PPS from the NaviSys module:

```ini
[Unit]
Description=PPS from /dev/ttyS0
After=dev-ttyS0.device
Requires=dev-ttyS0.device

[Service]
Type=forking
ExecStart=/usr/sbin/ldattach pps /dev/ttyS0
Restart=always

[Install]
WantedBy=multi-user.target
```

This creates /dev/pps1 (pps0 being attached to the PTP-capable network card) and attaches the kernel’s own PPS line discipline to the serial port, so the kernel timestamps the PPS edges itself. In a VM, I had some weirdness where asserts would arrive fine but clears would arrive with stale timestamps. No idea what that was about, and I can’t explain why it’s fine in a container. I don’t think chrony uses the clears anyway, but it was still… odd.
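To poke at the assert/clear behaviour without `ppstest`, the kernel also exposes the most recent edges in sysfs as `/sys/class/pps/ppsN/assert` and `/sys/class/pps/ppsN/clear`, each formatted `seconds.nanoseconds#sequence`. A minimal Python sketch, assuming the pps1 device created by the unit above; the 1.5 s “stale” threshold is an arbitrary assumption, not something the kernel or chrony defines.

```python
from pathlib import Path
from typing import NamedTuple


class PpsEvent(NamedTuple):
    seconds: int
    nanoseconds: int
    sequence: int

    @property
    def timestamp(self) -> float:
        return self.seconds + self.nanoseconds / 1e9


def parse_pps_event(text: str) -> PpsEvent:
    """Parse the kernel's '<sec>.<nsec>#<sequence>' sysfs format."""
    stamp, _, seq = text.strip().partition("#")
    sec, _, nsec = stamp.partition(".")
    return PpsEvent(int(sec), int(nsec), int(seq))


def read_pps(device: str = "pps1") -> tuple[PpsEvent, PpsEvent]:
    """Latest (assert, clear) pair for a PPS device; run where the device is visible."""
    base = Path("/sys/class/pps") / device
    return (parse_pps_event((base / "assert").read_text()),
            parse_pps_event((base / "clear").read_text()))


def clear_looks_stale(assert_ev: PpsEvent, clear_ev: PpsEvent, max_gap_s: float = 1.5) -> bool:
    """Heuristic: with both edges captured, the newest clear should be within ~1 s of the newest assert."""
    return abs(assert_ev.timestamp - clear_ev.timestamp) > max_gap_s
```

Polling `read_pps()` once a second and logging `clear_looks_stale` would show whether the VM-style stale clears ever reappear inside the container.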

Also on the Proxmox host, I changed the NTP server from the NTP pool to my (soon to be local) NTP server, to avoid the host changing the system time out from under the NTP server in the container. It took me far longer than I care to admit to figure out where an occasional sudden time change was coming from.


I created a new privileged LXC container (ID 123) and edited its conf file (/etc/pve/lxc/123.conf) as below:

```
arch: amd64
cores: 1
features: nesting=1
hostname: xxxxxxx
memory: 2048
ostype: debian
rootfs: local:123/vm-123-disk-0.raw,size=8G
swap: 512
lxc.net.0.type: phys
lxc.net.0.link: enp2s0
lxc.net.0.flags: up
lxc.cgroup2.cpuset.cpus: 3
lxc.cgroup2.devices.allow: c 246:* rwm
lxc.mount.entry: /dev/pps1 dev/pps1 none bind,optional,create=file
lxc.cgroup2.devices.allow: c 4:64 rwm
lxc.mount.entry: /dev/ttyS0 dev/ttyS0 none bind,optional,create=file
lxc.cap.drop:
lxc.apparmor.profile: unconfined
```

Waaaaaaay more RAM and swap than I needed (it hasn’t exceeded 50MB since stabilising) but I’ll leave it be for now. I also experimented with an unprivileged container and had some odd issues, so I went back to privileged. Most of it is self explanatory, but some key things from the above:

- `nesting=1`: Debian won’t spawn a login prompt without it, and no doubt other stuff breaks too. You get a warning about this, so turning it off probably isn’t something you’d choose to do, but I experimented without it, so I’ll highlight that yes, you do need it.
- `lxc.apparmor.profile: unconfined`: honestly no idea if this is necessary or not. I never ran into any AppArmor issues in early experiments; this ended up being a way to reduce the number of variables more than anything.
- `lxc.cgroup2.cpuset.cpus: 3`: pin the LXC container to the fourth core of the Celeron J4105 in the Wyse 5070 (cores are zero-indexed, numbered 0–3, so this is Core 3). More on this later.
- `lxc.net...`: pass through the Intel i210 (enp2s0) as a physical interface.
- `lxc.cgroup2.devices.allow: c 246:* rwm` + `lxc.mount.entry: /dev/pps1 dev/pps1 none bind,optional,create=file`: pass through /dev/pps1. Essentially, allow the container to access all character devices with major number 246 (PPS on my host; the PPS major number is allocated dynamically, so check yours with `ls -l /dev/pps1`), then bind-mount pps1 into the container, make it optional for container start-up (in case something breaks), and create the file in /dev/ if it’s not there.
- `lxc.cgroup2.devices.allow: c 4:64 rwm` + `lxc.mount.entry: /dev/ttyS0 dev/ttyS0 none bind,optional,create=file`: as above, pass through /dev/ttyS0 (for the UBX or NMEA data). Major number 4 is the tty driver, and minor number 64 is ttyS0 specifically.
- `lxc.cap.drop:`: override the default list of capabilities that Proxmox drops from the container (by blanking out that line). In this case, I want to allow the container to do a bunch of stuff containers normally shouldn’t be allowed to do (e.g., change the system time via `sys_time`).
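Because the PPS major number (246 here) can differ between hosts and kernels, one option is to generate the two passthrough lines from the real device node rather than hard-coding them. A minimal Python sketch, to run on the Proxmox host; the helper names are hypothetical, and it only builds text for you to paste into the container’s conf file.

```python
import os
import stat


def lxc_passthrough_lines(path: str, major: int, minor: int, all_minors: bool = False) -> list[str]:
    """Build the cgroup2 allow + bind-mount pair used in the container config."""
    minor_spec = "*" if all_minors else str(minor)
    target = path.lstrip("/")  # LXC mount targets are relative to the container rootfs
    return [
        f"lxc.cgroup2.devices.allow: c {major}:{minor_spec} rwm",
        f"lxc.mount.entry: {path} {target} none bind,optional,create=file",
    ]


def lines_for_host_device(path: str, all_minors: bool = False) -> list[str]:
    """Look up a character device's actual major/minor numbers and build its lines."""
    st = os.stat(path)
    if not stat.S_ISCHR(st.st_mode):
        raise ValueError(f"{path} is not a character device")
    return lxc_passthrough_lines(path, os.major(st.st_rdev), os.minor(st.st_rdev), all_minors)
```

On the host, `print("\n".join(lines_for_host_device("/dev/pps1", all_minors=True)))` would print the PPS pair; passing `/dev/ttyS0` without the wildcard should reproduce the `c 4:64` lines above.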

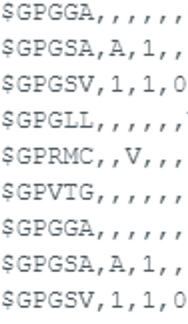

Container created, gpsd + chrony installed, as per the million and one tutorials already on the internet. ppstest shows healthy asserts and clears, and cgps shows the data from the GNSS module coming through as per the above post.

/etc/default/gpsd and /etc/chrony/chrony.conf were fairly standard, but I’ll add the following notes.

My refclock lines in chrony.conf were:

```
refclock SOCK /var/run/chrony.ttyS0.sock delay 0.05 refid GNSS poll 3
refclock PPS /dev/pps1 lock GNSS refid PPS poll 0 prefer
```

For anyone following along at home: the PPS signal is an accurate trigger marking *when* a second boundary happens, but the data saying *which* second that is/was comes in via GPS/GNSS (or another NTP server or time source, for that matter). PPS needs to be as accurate as possible; the other time source can be slightly out, as long as it’s well within half a second so it still identifies the right second.
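The “PPS says when, GNSS says which second” idea comes down to a bit of rounding. This is a simplified Python illustration, assuming the PPS edge lands exactly on a UTC second and the coarse GNSS offset is good to better than ±0.5 s; it is not chrony’s actual `lock` implementation, which also filters and weights samples.

```python
def precise_offset(pps_edge_local: float, coarse_offset: float) -> float:
    """Local-clock-minus-UTC offset, measured at one PPS edge.

    pps_edge_local: system timestamp of the PPS edge (precise).
    coarse_offset:  local-minus-UTC estimate from the GNSS data (e.g. NMEA,
                    which may be tens of milliseconds late).
    """
    # The coarse estimate only has to pick which whole UTC second the edge marks.
    utc_second = round(pps_edge_local - coarse_offset)
    # The edge itself then gives the offset to PPS precision.
    return pps_edge_local - utc_second
```

For example, a clock running 3 µs slow sees the edge at 999.999997; even if the NMEA-derived estimate is 47 ms off, `precise_offset(999.999997, -0.047)` still rounds to second 1000 and returns about −3 µs. If the coarse source were off by more than half a second, the rounding would pick the wrong second and the result would be out by a whole second.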

I tried using SHM initially, but found SOCK to have much less delay and far less jitter. SOCK also seems to be the “more modern” way of communicating between gpsd and chrony anyway. I added delay 0.05 about 36 hours ago, as the ~50 ms lag between the GNSS data and PPS was pretty consistent on my system.

In /etc/default/gpsd:

```
DEVICES="/dev/ttyS0"

# Other options you want to pass to gpsd
GPSD_OPTIONS="-n -s 115200 -b"

# Automatically hot add/remove USB GPS devices via gpsdctl
USBAUTO="false"

GPSD_GROUP="dialout"
```

Not much here beyond the defaults or the self-explanatory, but: `-n` = don’t wait for a client to connect, poll the device all the time (standard in any NTP tutorial); `-s 115200` = baud rate, previously programmed into the module. I did several experiments with and without `-b`, and ended up adding it again (after reprogramming the module back to my own config) to eliminate more variables. `-b` is “broken device” mode and essentially puts gpsd into read-only mode, rather than allowing gpsd to send commands to the module to change its config. I have no idea if I was running into odd firmware quirks with my module, but I found that life was better with the module left alone, in my case. Likewise, `GPSD_GROUP="dialout"` was added at one stage due to an odd permissions issue I was having with the serial port. Not sure if it needs to be there, but it didn’t hurt, so I’ve left it.

The NIC and the various routers/firewalls at home were configured as you’d normally configure a NIC/routers/firewalls. I also added chrony-stats (GitHub: TheHuman00/chrony-stats, “Lightweight: Monitoring Chrony and Network”) and the Debian micro-httpd package (trixie) to the container, to get some remote stats so I could obsess over every config tweak while it settled.


Back to the host:

/etc/default/grub edited to include the following (then `update-grub` and a reboot):

```
GRUB_CMDLINE_LINUX_DEFAULT="quiet intel_iommu=on iommu=pt isolcpus=3 nohz_full=3 rcu_nocbs=3"
```

I added iommu=pt during my VM experiments, but don’t think it’s technically needed for LXC containers. Didn’t hurt to leave it there, so I left it.

`isolcpus=3 nohz_full=3 rcu_nocbs=3` is arguably the more interesting part. `isolcpus=3` removes Core 3 from the general SMP “pool”, so the scheduler won’t put background tasks on it; `nohz_full=3` stops the periodic scheduling tick on Core 3 while only one task is running there; and `rcu_nocbs=3` moves Read-Copy-Update callback processing off Core 3. In short, we want as much stuff removed from Core 3 as possible, so that the LXC container pinned to Core 3 has full use of it.
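After rebooting, it’s worth confirming the kernel actually picked the parameters up. A minimal Python sketch that parses the kernel’s cpulist format from `/proc/cmdline` and `/sys/devices/system/cpu/isolated`; it assumes plain lists like `3` or `0-2,5` (the stride syntax isn’t handled), and the function names are hypothetical.

```python
from pathlib import Path


def parse_cpulist(text: str) -> set[int]:
    """Parse the kernel's cpulist format, e.g. '3', '0-2,5' or an empty string."""
    cpus: set[int] = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            first, last = part.split("-", 1)
            cpus.update(range(int(first), int(last) + 1))
        else:
            cpus.add(int(part))
    return cpus


def cmdline_cpus(cmdline: str, param: str) -> set[int]:
    """CPUs named by a boot parameter such as 'isolcpus=3' (empty set if absent)."""
    for token in cmdline.split():
        key, sep, value = token.partition("=")
        if key == param and sep:
            # isolcpus may carry flags first, e.g. 'isolcpus=domain,managed_irq,3'
            numeric = [p for p in value.split(",") if p[:1].isdigit()]
            return parse_cpulist(",".join(numeric))
    return set()


def isolation_report(core: int) -> dict[str, bool]:
    """Run on the host: is `core` named in each parameter, and does sysfs agree?"""
    cmdline = Path("/proc/cmdline").read_text()
    report = {p: core in cmdline_cpus(cmdline, p) for p in ("isolcpus", "nohz_full", "rcu_nocbs")}
    isolated = Path("/sys/devices/system/cpu/isolated")
    if isolated.exists():
        report["sysfs isolated"] = core in parse_cpulist(isolated.read_text())
    return report
```

With the GRUB line above applied, `isolation_report(3)` would be expected to come back all `True`; a `False` usually means the bootloader config wasn’t regenerated.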

Lastly, on the host, `cat /proc/interrupts` shows which interrupts are being handled on each CPU core. I won’t reproduce the whole lot here, but we want to pin the interrupts for /dev/ttyS0 and the enp2s0 NIC to Core 3, and move as many other interrupts off Core 3 as possible too.

In my case, that’s IRQ 4 (/dev/ttyS0) and IRQs 131–135 (enp2s0) to Core 3, and everything else in /proc/interrupts off Core 3 (I chose Core 0).

As examples:

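- `echo 8 > /proc/irq/4/smp_affinity` moves IRQ 4 to Core 3 (binary 1000 = 8 = “Use this core? YNNN”, reading Cores 3, 2, 1, 0 from left to right).
- `echo 1 > /proc/irq/136/smp_affinity` moves IRQ 136 to Core 0 (binary 0001 = 1 = “Use this core? NNNY”; 136 in my case being enp1s0, i.e., hardware unrelated to the NTP container).

smp_affinity takes a hexadecimal bitmask, so for single-digit values like these, hex and decimal happen to be the same.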
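The mask arithmetic can be sketched in Python: one bit per core, written out in hex. This is illustrative only. Note that hex and decimal diverge from Core 4 upwards (Core 4 alone is `10`, not `16`), and the kernel also offers `/proc/irq/N/smp_affinity_list`, which takes plain core numbers such as `3` and avoids the bitmask altogether.

```python
def affinity_mask(cores: list[int]) -> str:
    """Hex string for /proc/irq/<n>/smp_affinity: bit N set means CPU N may handle the IRQ."""
    mask = 0
    for core in cores:
        mask |= 1 << core
    if mask == 0:
        raise ValueError("an IRQ needs at least one allowed core")
    return format(mask, "x")


def cores_from_mask(mask_text: str) -> list[int]:
    """Decode a value read back from smp_affinity (big systems print comma-separated 32-bit groups)."""
    mask = int(mask_text.strip().replace(",", ""), 16)
    return [bit for bit in range(mask.bit_length()) if (mask >> bit) & 1]
```

So `affinity_mask([3])` gives the `8` used for IRQ 4, `affinity_mask([0])` gives the `1` used for IRQ 136, and reading a mask back with `cores_from_mask` is a quick way to confirm a write took effect.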

I’ll probably slap all that into a shell script to run at boot at some stage, but for now I’m just being really lazy and redoing it from .bash_history each time on the very rare occasions the Proxmox host needs to reboot. It also allows me to double check that the IRQs haven’t changed as I’ve been mucking around.
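For anyone who’d rather not replay .bash_history, here’s a minimal Python sketch of the same boot-time pinning. Assumptions: the `ttyS0`/`enp2s0` names are matched against the last column of /proc/interrupts, every other numeric IRQ goes to Core 0 as described above, and it only prints `echo` commands unless `dry_run=False` is passed (which needs root). Some IRQs refuse affinity changes, so write errors are reported rather than treated as fatal.

```python
import re
import sys
from pathlib import Path

# Assumed device names for this build; check the last column of your own /proc/interrupts.
PIN_PATTERN = re.compile(r"\b(ttyS0|enp2s0)\b")
ISOLATED_CORE = 3
HOUSEKEEPING_CORE = 0


def parse_interrupts(text: str) -> dict[str, str]:
    """IRQ number -> description (chip, trigger, device names) for numeric IRQs only."""
    lines = text.splitlines()
    ncpu = len(lines[0].split())  # header row: CPU0 CPU1 ...
    irqs: dict[str, str] = {}
    for line in lines[1:]:
        label, sep, rest = line.partition(":")
        label = label.strip()
        if not sep or not label.isdigit():
            continue  # NMI, LOC, ERR, ... have no /proc/irq/<n> directory
        irqs[label] = " ".join(rest.split()[ncpu:])
    return irqs


def plan(irqs: dict[str, str]) -> dict[str, str]:
    """Hex smp_affinity mask per IRQ: matching devices to the isolated core, the rest to housekeeping."""
    masks = {}
    for irq, desc in irqs.items():
        core = ISOLATED_CORE if PIN_PATTERN.search(desc) else HOUSEKEEPING_CORE
        masks[irq] = format(1 << core, "x")
    return masks


def apply(masks: dict[str, str], dry_run: bool = True) -> None:
    for irq in sorted(masks, key=int):
        target = Path("/proc/irq") / irq / "smp_affinity"
        if dry_run:
            print(f"echo {masks[irq]} > {target}")
            continue
        try:
            target.write_text(masks[irq])
        except OSError as err:  # e.g. the timer IRQ rejects affinity changes
            print(f"IRQ {irq}: {err}", file=sys.stderr)
```

`apply(plan(parse_interrupts(Path("/proc/interrupts").read_text())))` previews the commands; checking that preview against `cat /proc/interrupts` also catches IRQ numbers that have shifted since the last boot.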

cat /proc/interrupts after the changes should show the interrupt counts incrementing on the appropriate core only from this point.
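That check can also be automated by diffing two snapshots of /proc/interrupts. A minimal Python sketch with hypothetical function names; after pinning, `watch("4")` would be expected to return `[3]` if only Core 3 is servicing ttyS0.

```python
import time
from pathlib import Path


def per_cpu_counts(text: str) -> dict[str, list[int]]:
    """IRQ label -> per-CPU counts; lines without a full set of counts (e.g. ERR) are skipped."""
    lines = text.splitlines()
    ncpu = len(lines[0].split())
    counts: dict[str, list[int]] = {}
    for line in lines[1:]:
        label, sep, rest = line.partition(":")
        fields = rest.split()
        if not sep or len(fields) < ncpu or not all(f.isdigit() for f in fields[:ncpu]):
            continue
        counts[label.strip()] = [int(f) for f in fields[:ncpu]]
    return counts


def cpus_that_fired(before: dict[str, list[int]], after: dict[str, list[int]], irq: str) -> list[int]:
    """CPUs whose count for this IRQ went up between the two snapshots."""
    return [cpu for cpu, (a, b) in enumerate(zip(before[irq], after[irq])) if b > a]


def watch(irq: str, seconds: float = 5.0) -> list[int]:
    path = Path("/proc/interrupts")
    before = per_cpu_counts(path.read_text())
    time.sleep(seconds)
    after = per_cpu_counts(path.read_text())
    return cpus_that_fired(before, after, irq)
```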

I also checked that irqbalance wasn’t running (or even installed; it doesn’t seem to be in a fresh PVE 9 install). I didn’t add irqaffinity or kthread_cpus to the GRUB boot parameters, as I figured I didn’t want to accidentally stop the kernel (and therefore the PPS and LXC) from running on that core at all, nor remove default IRQs from Core 3 entirely. I thought it’d be better to tinker after boot using smp_affinity, but I’m so far down in the weeds and outside my own area of knowledge at this point. Happy to learn from anyone out there more familiar with this than I am!

I also didn’t move to an RT kernel on the host, as I know Proxmox really wants to use its own kernel and I wanted to keep Proxmox as stock-standard as possible.

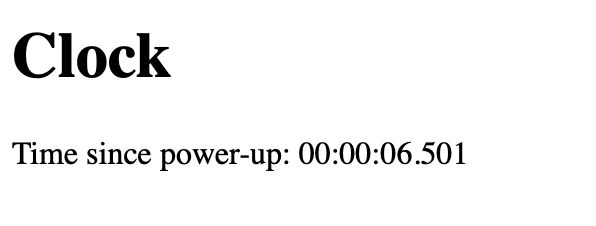

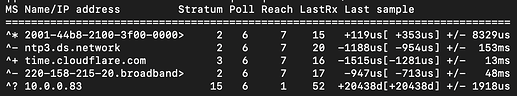

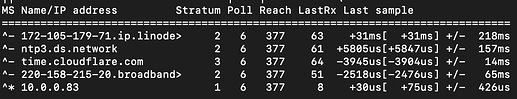

So, how is it performing?

In short, it’s accurate to within ~3–10 µs (~0.000003 to 0.000010 seconds) of UTC, and very stable. Not quite as tight as my bare metal experiments, but pretty close. Certainly close enough for a homelab Stratum 1 NTP server, and something that plays nicely with Proxmox.

It’s definitely given me a good source for home, and something to benchmark against when I come back to the ESP32 version of the project. For now, I will go outside and do some non-NTP-related things.


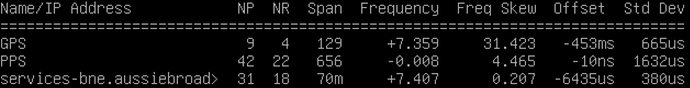

```
$ chronyc sourcestats -v
                             .- Number of sample points in measurement set.
                            /    .- Number of residual runs with same sign.
                           |    /    .- Length of measurement set (time).
                           |   |    /      .- Est. clock freq error (ppm).
                           |   |   |      /           .- Est. error in freq.
                           |   |   |     |           /         .- Est. offset.
                           |   |   |     |          |          |   On the -.
                           |   |   |     |          |          |   samples. \
                           |   |   |     |          |          |             |
Name/IP Address            NP  NR  Span  Frequency  Freq Skew  Offset  Std Dev
==============================================================================
GNSS                       29  15   224     +0.493      0.171    +12us    16us
PPS                        64  28    63     -0.007      1.375     -2ns    58us
services-bne.aussiebroad>   7   4  1167     +4.084      1.922   -837us   374us
time.cloudflare.com         6   4   453    +12.950     39.818  -2860us  1347us
tic.ntp.telstra.net        11   6  172m     +4.164      0.581  -1960us  1493us
```

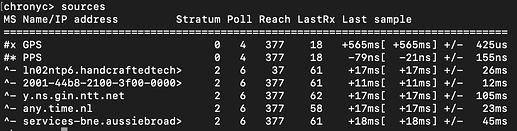

```
$ chronyc sources -v

  .-- Source mode  '^' = server, '=' = peer, '#' = local clock.
 / .- Source state '*' = current best, '+' = combined, '-' = not combined,
| /             'x' = may be in error, '~' = too variable, '?' = unusable.
||                                                 .- xxxx [ yyyy ] +/- zzzz
||      Reachability register (octal) -.           |  xxxx = adjusted offset,
||      Log2(Polling interval) --.      |          |  yyyy = measured offset,
||                                \     |          |  zzzz = estimated error.
||                                 |    |           \
MS Name/IP address         Stratum Poll Reach LastRx Last sample
===============================================================================
#- GNSS                          0   3   377    10  +5227ns[+3784ns] +/-   25ms
#* PPS                           0   0   377     0    -31us[  -32us] +/-   30ns
^- services-bne.aussiebroad>     2   6   377    26  -3186us[-3185us] +/-   30ms
^- time.cloudflare.com           3   6   377     1  -6558us[-6559us] +/-   16ms
^- tic.ntp.telstra.net           2  10   377   221  -2703us[-2638us] +/-   37ms
```
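All five sources show `377` in the Reach column. That register is 8 bits displayed in octal, shifted left on every poll with the newest result in the lowest bit, so 377 (binary 11111111) means each of the last eight polls got a reply. A small Python decoder, for illustration only:

```python
def reach_history(reach_octal: str) -> list[bool]:
    """Decode chronyc's Reach column into poll results, oldest first.

    The register is 8 bits shown in octal; each poll shifts it left and
    sets the lowest bit if a reply arrived.
    """
    value = int(reach_octal, 8)
    if not 0 <= value <= 0o377:
        raise ValueError(f"reach register out of range: {reach_octal}")
    return [bool((value >> bit) & 1) for bit in range(7, -1, -1)]


def missed_polls(reach_octal: str) -> int:
    """How many of the last eight polls went unanswered."""
    return reach_history(reach_octal).count(False)
```

A value like `376` would mean only the most recent poll was missed, while a freshly added source climbs 1, 3, 7, 17, 37 … as replies accumulate.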

```
$ chronyc selectdata -v
  . State: N - noselect, s - unsynchronised, M - missing samples,
 /         d/D - large distance, ~ - jittery, w/W - waits for others,
|          S - stale, O - orphan, T - not trusted, P - not preferred,
|          U - waits for update,, x - falseticker, + - combined, * - best.
|   Effective options   ---------.  (N - noselect, P - prefer
|   Configured options  ----.     \  T - trust, R - require)
|   Auth. enabled (Y/N) -.   \     \     Offset interval --.
|                        |    |     |                       |
S Name/IP Address        Auth COpts EOpts Last Score     Interval  Leap
=======================================================================
P GNSS                      N ----- -----   10   1.0    -25ms    +25ms  N
* PPS                       N -P--- -P---    0   1.0    -32us    -32us  N
P services-bne.aussiebroad> N ----- -----   25   1.0    -32ms    +26ms  N
P time.cloudflare.com       N ----- -----    0   1.0    -22ms  +9025us  N
P tic.ntp.telstra.net       N ----- -----  220   1.0    -40ms    +30ms  N
```

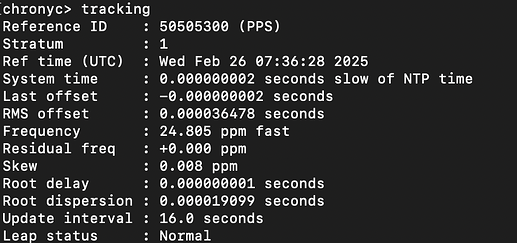

```
$ chronyc tracking
Reference ID    : 50505300 (PPS)
Stratum         : 1
Ref time (UTC)  : Wed Feb 25 00:30:00 2026
System time     : 0.000003012 seconds slow of NTP time
Last offset     : -0.000000811 seconds
RMS offset      : 0.000004714 seconds
Frequency       : 4.430 ppm fast
Residual freq   : -0.007 ppm
Skew            : 1.408 ppm
Root delay      : 0.000000001 seconds
Root dispersion : 0.000017366 seconds
Update interval : 1.0 seconds
Leap status     : Normal
```
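To graph or alert on these numbers, one option is to scrape `chronyc tracking`. A minimal Python sketch with hypothetical helper names; it treats “slow” as a negative system-minus-NTP offset, and decodes the Reference ID, which for a refclock is just its refid in hex ASCII (50505300 → “PPS”). For network servers the Reference ID is an IPv4 address instead, so that decode only makes sense for refclocks.

```python
import subprocess


def parse_tracking(text: str) -> dict[str, str]:
    """Split each 'Key : value' line on its first colon (colons in times stay in the value)."""
    fields: dict[str, str] = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return fields


def seconds_field_us(fields: dict[str, str], name: str) -> float:
    """Convert a '<number> seconds' field such as 'RMS offset' to microseconds."""
    return float(fields[name].split()[0]) * 1e6


def system_offset_us(fields: dict[str, str]) -> float:
    """System clock minus NTP time in microseconds ('slow' means behind, so negative)."""
    amount, _unit, direction, *_rest = fields["System time"].split()
    return float(amount) * 1e6 * (-1.0 if direction == "slow" else 1.0)


def decode_refid(fields: dict[str, str]) -> str:
    """'50505300 (PPS)' -> 'PPS' by decoding the hex refid as ASCII."""
    hex_id = fields["Reference ID"].split()[0]
    return bytes.fromhex(hex_id).rstrip(b"\0").decode("ascii", errors="replace")


def read_tracking() -> dict[str, str]:
    """Run chronyc inside the NTP container and parse its output."""
    out = subprocess.run(["chronyc", "tracking"], capture_output=True, text=True, check=True).stdout
    return parse_tracking(out)
```

Applied to the output above, `system_offset_us` gives about −3.0 µs and the RMS offset about 4.7 µs, matching the ~3–10 µs figure quoted earlier.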

References: